Apple is preparing to fundamentally reshape how users interact with their iPhones through its upcoming iOS 27 operating system. According to reports from Bloomberg’s Mark Gurman, the update will center on a significant overhaul of the camera system, driven by deeper integration of Visual Intelligence and a more capable, context-aware Siri.

This shift marks a transition from simple photo capture to intelligent visual analysis, positioning the iPhone not just as a camera, but as an active information processor.


The Evolution of Visual Intelligence

Apple introduced Visual Intelligence with the iPhone 16 in 2024, allowing users to extract and analyze data directly from images. iOS 27 is expected to expand these capabilities significantly. Rather than remaining a separate tool, Visual Intelligence is expected to be woven directly into the native photo and video modes.

Key anticipated features include:

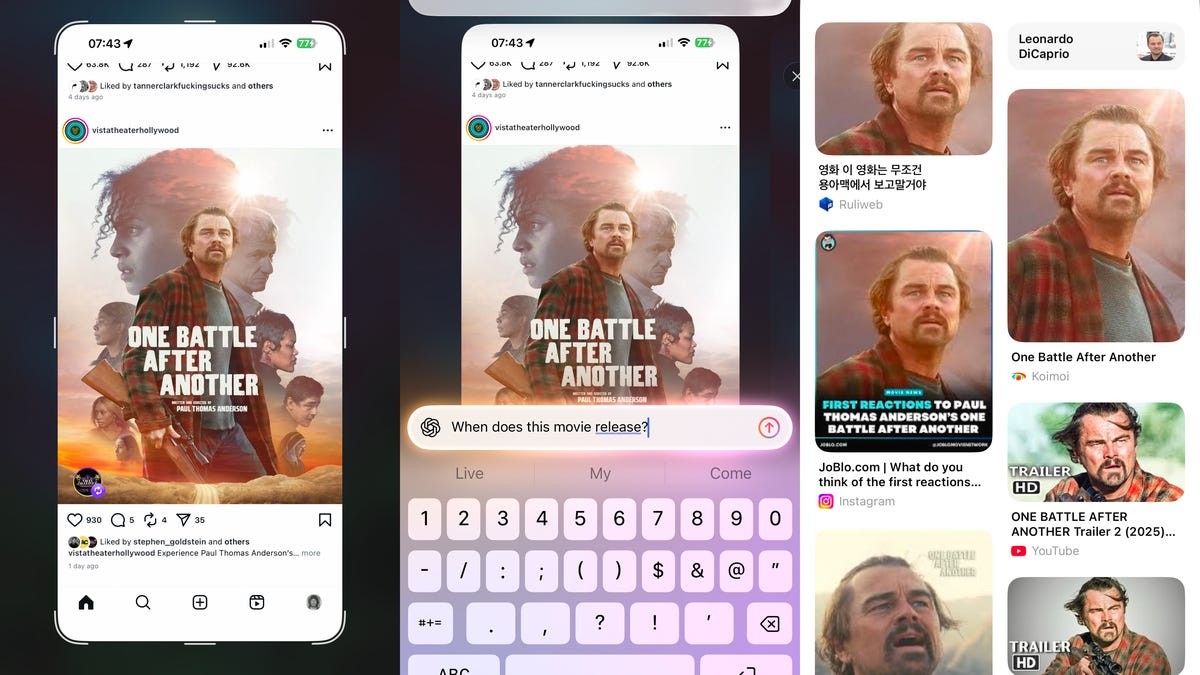

– Direct AI Integration: Potential connectivity with ChatGPT to answer complex questions about visual content.

– Health and Lifestyle Tracking: Enhanced ability to scan nutrition labels for dietary tracking, turning a simple photo into actionable health data.

– Seamless Analysis: The AI will work in the background, identifying objects, text, and contexts without requiring manual intervention from the user.

If the reports hold, this development matters because it would move AI from a novelty feature to a core utility, helping users process visual information with less manual effort.

A Smarter Siri for Camera Control

Alongside visual enhancements, Siri is undergoing a major transformation. Previously reported as a potential standalone AI chatbot, the new Siri is expected to function more deeply within the iOS ecosystem, particularly in controlling the camera.

Users will reportedly be able to use voice commands to seamlessly switch between modes—such as photo, video, and portrait—without navigating through menus. This integration addresses a common user pain point: the friction between seeing a moment and capturing it correctly. By allowing Siri to interpret intent and adjust settings automatically, Apple aims to make the photography experience more intuitive and hands-free.

Beyond the iPhone: Hardware Implications

The software updates in iOS 27 are likely precursors to broader hardware announcements expected at the 2026 Worldwide Developers Conference (WWDC) in June. Bloomberg suggests that Apple may reveal new Siri-focused hardware designed to interpret the physical world, including:

– Smart Glasses: For real-time visual assistance and information overlay.

– Wearable Pendants: Compact devices for ambient audio and visual processing.

– Updated AirPods: Enhanced sensors to analyze and interpret the surrounding environment.

These products indicate that Apple is building an ecosystem where AI is not confined to the screen but extends into wearable technology, creating a more immersive and responsive user experience.

What This Means for Users

The combination of advanced Visual Intelligence and a smarter Siri represents a strategic pivot for Apple. Having delayed some AI initiatives earlier in the year, the company appears to be prioritizing quality and deep integration over rushed features. The intended result is a system that understands context, whether that means recognizing a food label, adjusting camera settings via voice, or preparing for new wearable tech.

Key Takeaway: iOS 27 is not just an update; it’s a bridge to an AI-first future where the iPhone acts as an intelligent assistant for both visual and auditory tasks.

While Apple has not officially confirmed these features, the trajectory is clear. As the company prepares for WWDC 2026, users can expect a more seamless, intelligent, and connected iPhone experience that blurs the line between photography, communication, and artificial intelligence.