At the recent Google Cloud Next conference, Google signaled a major strategic shift: moving beyond simple AI assistants toward a future defined by “agentic AI.” The company unveiled a suite of updates designed to transform how enterprises operate, focusing on autonomous agents and the massive computing power required to run them.

The Rise of the “Agentic Enterprise”

While Google reports that 75% of its customers already use AI, the company wants to move past basic integration in tools like Gmail or Docs. The new goal is the “agentic enterprise.”

Unlike conventional AI assistants, which wait for a human prompt at every step, agentic AI refers to autonomous software agents that can complete complex, multi-step tasks with minimal supervision. This marks a significant evolution in the industry:

– From Assistants to Actors: Instead of just writing an email, an agent can manage a workflow, coordinate between different software applications, and execute tasks independently.

– Industry Trend: This follows a broader movement among tech leaders—including OpenAI and Anthropic—to transition AI from a conversational tool into a functional workforce capable of handling coding and administrative processes.
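The difference between an assistant and an agent can be sketched in a few lines of Python. This is a minimal illustration, not Google's implementation: the tool functions (`lookup_invoice`, `draft_reminder`, `log_followup`), the `Agent` class, and the invoice data are all hypothetical, and the plan is fixed in advance, whereas a real agent would typically let a model decide which step to take next.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical tools standing in for separate business applications.
def lookup_invoice(state: dict) -> dict:
    # Pretend to query a billing system (made-up data).
    state["invoice"] = {"id": "INV-001", "amount": 1200, "paid": False}
    return state

def draft_reminder(state: dict) -> dict:
    # Pretend to write an email based on the previous step's result.
    inv = state["invoice"]
    state["email"] = f"Reminder: invoice {inv['id']} for ${inv['amount']} is unpaid."
    return state

def log_followup(state: dict) -> dict:
    # Pretend to record the action in a CRM.
    state["logged"] = True
    return state

@dataclass
class Agent:
    """Runs a multi-step plan without a human prompt between steps."""
    plan: list[Callable[[dict], dict]]
    history: list[str] = field(default_factory=list)

    def run(self, goal: str) -> dict:
        state = {"goal": goal}
        for step in self.plan:
            state = step(state)                 # each step builds on the last
            self.history.append(step.__name__)  # audit trail for supervision
        return state

agent = Agent(plan=[lookup_invoice, draft_reminder, log_followup])
result = agent.run("chase unpaid invoices")
print(agent.history)
print(result["email"])
```

An assistant would stop after something like `draft_reminder` and hand the text back to a person. The agent carries the result through every step and keeps a history that a supervisor can review afterward.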

Building the Infrastructure: Gemini Enterprise Agents

To manage this shift, Google Cloud is introducing the Gemini enterprise agent platform. It serves as a central nervous system for businesses, allowing them to oversee and coordinate their AI agents.

Key features of this rollout include:

– The Gemini Enterprise App: A dedicated interface for employees to interact with and deploy AI.

– New Agent Designer: A tool that lets users build and schedule agents that perform tasks across multiple business applications.

– Security and Integration: Google Cloud CEO Thomas Kurian emphasized that these updates focus on ensuring agents are securely connected to a company’s internal data systems while optimizing for cost and performance.

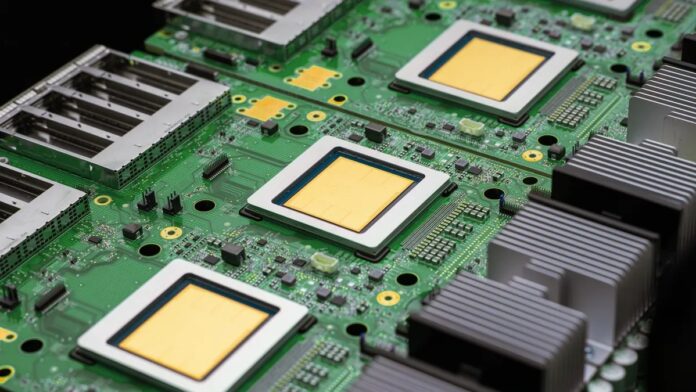

Powering the Future: The 8th Generation TPUs

Autonomous agents require immense computational strength. To meet this demand, Google announced two new eighth-generation Tensor Processing Units (TPUs), specifically engineered for high-intensity AI workloads.

Unlike general-purpose processors, these chips are specialized for the heavy lifting of artificial intelligence:

1. The 8T Chip (Training)

Designed for the “learning” phase of AI, the 8T chip is built to make training large models more efficient. Google claims it offers three times the processing power of its predecessor, the seventh-gen Ironwood.

2. The 8I Chip (Inference)

Designed for the “execution” phase, when an AI actually responds to a user, the 8I chip focuses on speed and memory. It boasts an 80% improvement in SRAM capacity and can be scaled into massive systems containing more than 11,000 chips.

Summary: Google is attempting to bridge the gap between AI potential and enterprise reality by providing both the autonomous software (Gemini agents) and the specialized hardware (8th-gen TPUs) necessary to power a fully automated digital workforce.